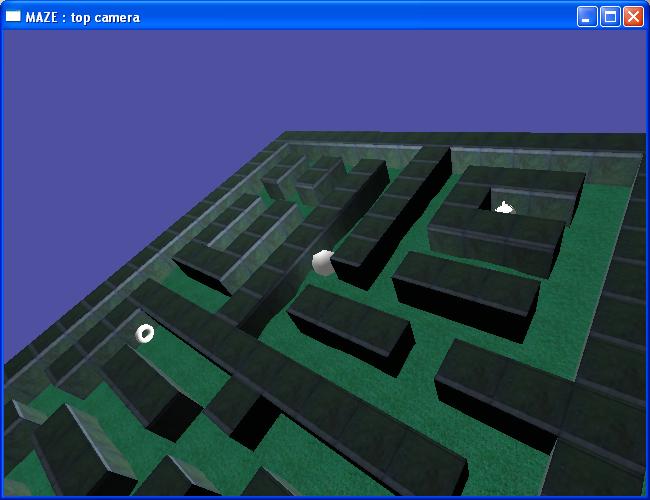

Today, my first Xbox game was published on the Live marketplace! It was definitely a great experience, and I am very grateful to all the playtesters and reviewers from the Creators Club. However, my submission took a lot of time, and that could have been avoided if I had been a little more careful. So in this post, I'll try to give you some tips to make the publishing process smoother.

If you don't know what I'm talking about, you should take a look at this introduction to game dev for Xbox 360 using XNA.

OK, you made an XNA game, and you'd like to sell it on the marketplace. First, you'll need a premium subscription to creators.xna.com. It will allow you to submit your game for playtest and for review. Then, if you want everything to go smoothly:

Strictly follow the Microsoft guidelines

The reviewers also base their judgement on those guidelines. While some points are open to discussion, most of them will be a reason to fail your game if you don't follow them. Here is a list of the common things to think about carefully before you submit your game:

- Pause your game if the player disconnects their pad, or if any kind of guide is displayed.

- Write your load/save code carefully. Save asynchronously, and think about players who have both a hard disk and a memory unit (MU).

- Do NOT assume your player will only use the first controller.

- Use fonts/sprites big enough to look OK even on SD screens.

- Running your game on the Xbox IS different from running it on your computer. Expect to do a bit of optimization, and think about the off-screen areas on TVs.

- Avoid crashes at any cost (easy :-))
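To make a few of these points more concrete, here is a rough, language-neutral sketch in Python. It is not XNA code: `PadState`, `find_active_pad`, `should_pause` and `safe_area` are names I made up for illustration, and the 10% margin per edge is an assumed, conservative default rather than an official figure. In a real XNA game you would read the pad and guide state from the framework instead.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass
class PadState:
    """Hypothetical snapshot of one controller slot for the current frame."""
    connected: bool
    start_pressed: bool = False


def find_active_pad(pads: Sequence[PadState]) -> Optional[int]:
    """Return the index of the first connected pad pressing Start, or None.

    Checking every slot avoids assuming the player holds the first controller.
    """
    for index, pad in enumerate(pads):
        if pad.connected and pad.start_pressed:
            return index
    return None


def should_pause(pads: Sequence[PadState], active: int, guide_visible: bool) -> bool:
    """Pause when the active pad is unplugged or a guide is on screen."""
    return guide_visible or not pads[active].connected


def safe_area(width: int, height: int, margin_percent: int = 10) -> Tuple[int, int, int, int]:
    """Return (x, y, w, h) of the region left after trimming each edge.

    The 10% default is an assumption; important text and HUD elements
    should stay inside this rectangle so TV overscan does not cut them off.
    """
    x = width * margin_percent // 100
    y = height * margin_percent // 100
    return x, y, width - 2 * x, height - 2 * y
```

On the title screen you would call something like `find_active_pad` every frame until it returns an index, remember that index for the rest of the session, and then check `should_pause` on every update.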

Have a decent playtest session

After you submit your game for playtest, you should get a lot of feedback from other developers. It's always a pain to go back to something you considered finished, but if you listen to their advice, you'll save yourself a lot of time during review. And just think about what a testing team would cost you if you had to pay them!

Think carefully about “presentation”

Before you submit for review, you should work a little on the images, screenshots and descriptions that will represent your game. The screenshots are important to make people actually willing to review, and ultimately play, your game. The same goes for the box art and the icon.

The description, however, is a bit tricky: I recommend that you only write descriptions in the languages your game is localized in. For instance, if you submit a game in English only, don't write a French description. (This is what I did for ArkX, and I understood only recently that it was a mistake.) Your game will have to be reviewed by people who speak the languages you set. So if you set a French, a Spanish and an Italian description, you'll need to be reviewed by people speaking Spanish/English, Italian/English, and French/English. There are far fewer of these reviewers than English-only reviewers, so you may get stuck for lack of reviews in a particular language. So, before submitting your game for review, only set descriptions in the languages you actually translated your game into.

Don't take reviews personally

This point isn't specific to XNA games. Developers quite often have a deep relationship with their own code, and take criticism very personally. If someone fails your game, it means there WAS a reason to fail it. It can be discussed, and your game won't be rejected for a single fail. And if a second reviewer fails your game too (2 fails result in a rejection), it merely means you should fix things and submit again. Of course, that's easier said than done: ArkX was rejected 4 times before approval, and at some point I was even wondering if it was worth carrying on. But then, after reading posts on the forums, I could see the reviewers were only doing what was asked of them. And it saves a lot of time when someone tells you where the problem is. (This point comes from my own experience of the review process, so it may not apply to everyone.)

Do your bit for the community

The playtesters and reviewers are developers like yourself. It will really help your submission if you review some games as well. I didn't understand this at first, but I got more reviews once I became a bit more active in reviewing and on the community forums. And some games are really fun to review, so don't hesitate!

In any case, good luck with your submission, and trust me, it's worth the effort! (The euphoria from the approval mail hasn't worn off yet.)